In the last year, chatbots powered by large language models (LLMs) have appeared everywhere and have even proved … useful. But how do they work?

Large language models are the fundamental architecture behind chatbots like ChatGPT or Bard.

A question typed into ChatGPT, such as “What is the capital of France?”, has to be processed by an LLM in order to produce an answer like “The capital of France is Paris”.

LLMs, like some other sorts of artificial intelligence, are inspired by the way human brains work, but do not recreate it. The basic structure of these models consists of nodes and connections. The nodes in a large language model are, in essence, words, and the basic task of the model is to map the “distances” between words, with the output being “the word most likely to come next”.

The words, and in some cases parts of words, such as the plural marker “s” or the prefix “un-”, are stored in the model as lists of numbers, or vectors. The principle is the same as for a map reference such as […]. That one is for Paris. The first number represents distance and direction east-west from Greenwich. The second does the same for distance from the equator. The numbers also tell us about the relationship between the places. The numbers for Paris and Orléans are close together, because the two places are close together. Using some simple maths, we can derive from those numbers how far apart the places are.

The numbers stored for words in the model similarly place them in a “space”, and similarly encode how “close together” they are in that space. But the distances are not only expressed in two dimensions, as map references are, nor in three dimensions, as in a cloud of points, but in far more: […] in one LLM. We don't know exactly what the dimensions encode, but they may be things like “substitutability”. On that dimension “happy” would be close to “sad”, even if on another dimension they might be very far apart.

The basic predictive text in SMS apps, in contrast, only really has one dimension: which word most commonly, in all scenarios, comes next? But crucially an LLM is still, deep down, only figuring out what word, or sequence of words, is most likely to come next. It can do so much better than predictive text for two related reasons: “transformers” and “attention”.

[…] are in its utterance. If you ask “What is a tidy thing to eat pasta with?” and “What is a nice thing to eat pasta with?”, the LLM will start typing its answer … In each case the LLM gets the first few words from rearranging your question into a response. Now it just has to find the most probable next word.

First the LLM weights all the relationships between all the words it knows, in thousands of dimensions, based on its immense corpus of training data. But then, crucially, it looks at what words have come before and reweights those associations. It is possible that within the model's computations, “tidy” has a close relationship with “utility” and “tools”, and that informs the output. Indeed the word “with” itself will be reweighted by the preceding terms to lie closer to one set of its alternatives, such as “using” or “by means of”. In the second sentence “nice”, it seems, has relationships with “taste” and “flavour”, and the word “with” gets reweighted to lean closer to its other alternative, “accompanied by”.

That reweighting step is what the LLM technicians call a “transformer”, and the principle of re-evaluating the weights based on the salience given to previous bits of the text is what they call “attention”. The LLM applies these steps to every part of a given conversation. So if you ask “What is the capital of France?”, it can re-evaluate “capital” to probably mean “city”, not “financial resources”, when it gets the added input of “France”. And when you follow up with “[…]?”, it has already assigned enough salience to the idea of “Paris” that it can conclude that “there” is standing in for “Paris”.

Attention is widely considered a breakthrough development in natural language AI, but it does not make for successful models on its own.
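The earlier point, that closeness can be read off lists of numbers with some simple maths, can be sketched in a few lines of Python. This is an illustration only, not how any particular LLM stores words. The coordinates are rounded (longitude, latitude) values supplied for the example, the four-number word vectors are invented, and `distance` and `nearest` are hypothetical helper names.

```python
import math

def distance(a, b):
    """Straight-line (Euclidean) distance between two equal-length lists of numbers."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def nearest(name, table):
    """Return the other entry in `table` whose numbers lie closest to those of `name`."""
    others = (key for key in table if key != name)
    return min(others, key=lambda key: distance(table[name], table[key]))

# Assumption: approximate (longitude, latitude) pairs in degrees, rounded for this sketch.
# Distances in raw degrees are only a rough stand-in for distance on the ground.
places = {
    "Paris": [2.35, 48.86],
    "Orléans": [1.91, 47.90],
    "Marseille": [5.37, 43.30],
}

# Assumption: invented 4-dimensional "word vectors"; real models use far more dimensions
# and learn the values from training data.
words = {
    "happy": [0.9, 0.8, 0.1, 0.3],
    "glad": [0.8, 0.9, 0.2, 0.3],
    "fork": [0.1, 0.0, 0.9, 0.7],
}

print(nearest("Paris", places))  # the place whose numbers are closest to those of Paris
print(nearest("happy", words))   # the word whose numbers are closest to those of "happy"
```

The same `distance` function works whether each entry has two numbers or four, which is the sense in which word vectors extend the map-reference idea to more dimensions.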
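For contrast, the “one dimension” of basic predictive text, picking whichever word most commonly comes next regardless of context, might look something like the sketch below. The tiny corpus is made up for the example, and `train` and `predict` are hypothetical names, not the API of any real keyboard app.

```python
from collections import Counter, defaultdict

def train(text):
    """Count, for every word in the text, which words follow it and how often."""
    follows = defaultdict(Counter)
    tokens = text.lower().split()
    for current, following in zip(tokens, tokens[1:]):
        follows[current][following] += 1
    return follows

def predict(follows, word):
    """Return the single most common follower of `word`, or None if the word is unseen."""
    counts = follows.get(word.lower())
    return counts.most_common(1)[0][0] if counts else None

# Assumption: a made-up miniature corpus standing in for real training text.
corpus = (
    "eat pasta with a fork . eat pasta with a spoon . "
    "eat pasta with cheese . eat soup with a spoon"
)
model = train(corpus)

print(predict(model, "pasta"))
print(predict(model, "with"))
```

Because this lookup only ever sees the previous word, it gives the same suggestion after “with” whether the question was about a tidy or a nice thing to eat pasta with.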
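The reweighting described above can be illustrated with a toy version of attention. This is a heavily simplified sketch under stated assumptions: the two-number vectors are invented so that the first number loosely means “tool-ness” and the second “taste-ness”, the names `attend` and `sense_of_with` are hypothetical, and real transformers add learned projections, many attention heads and many layers that are left out here.

```python
import math

def dot(a, b):
    """Dot product: larger when two vectors point in similar directions."""
    return sum(x * y for x, y in zip(a, b))

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(target, context):
    """Reweight `target` as a salience-weighted blend of the context vectors."""
    weights = softmax([dot(target, c) for c in context])
    return [sum(w * c[i] for w, c in zip(weights, context)) for i in range(len(target))]

# Assumption: invented vectors of the form [tool-ness, taste-ness].
VEC = {
    "tidy": [0.9, 0.1],
    "nice": [0.1, 0.9],
    "pasta": [0.3, 0.3],
    "with": [0.5, 0.5],
}
# Assumption: invented vectors for the two readings of "with" mentioned in the article.
SENSES = {"using": [1.0, 0.2], "accompanied by": [0.2, 1.0]}

def sense_of_with(modifier):
    """Blend "with" with its context, then report which reading it now lies closer to."""
    context = [VEC[modifier], VEC["pasta"], VEC["with"]]
    reweighted = attend(VEC["with"], context)
    return max(SENSES, key=lambda sense: dot(reweighted, SENSES[sense]))

print(sense_of_with("tidy"))
print(sense_of_with("nice"))
```

With these invented numbers, “tidy” pulls “with” towards “using” and “nice” pulls it towards “accompanied by”, which is the effect the article describes, reproduced here by construction rather than learned from data.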
Each of those models then goes through extensive training, partly to master the question-and-response format, and often to weed out unacceptable responses, sometimes sexist or racist, that would arise from an uncritical adoption of the material in the training corpus.

Most of the visualisations are illustrative but informed by conversations with industry experts, to whom thanks, and by interaction with publicly available LLMs. The vector for happy is from the BERT language model using the transformers Python package.

Australia Latest News, Australia Headlines

Similar News:You can also read news stories similar to this one that we have collected from other news sources.

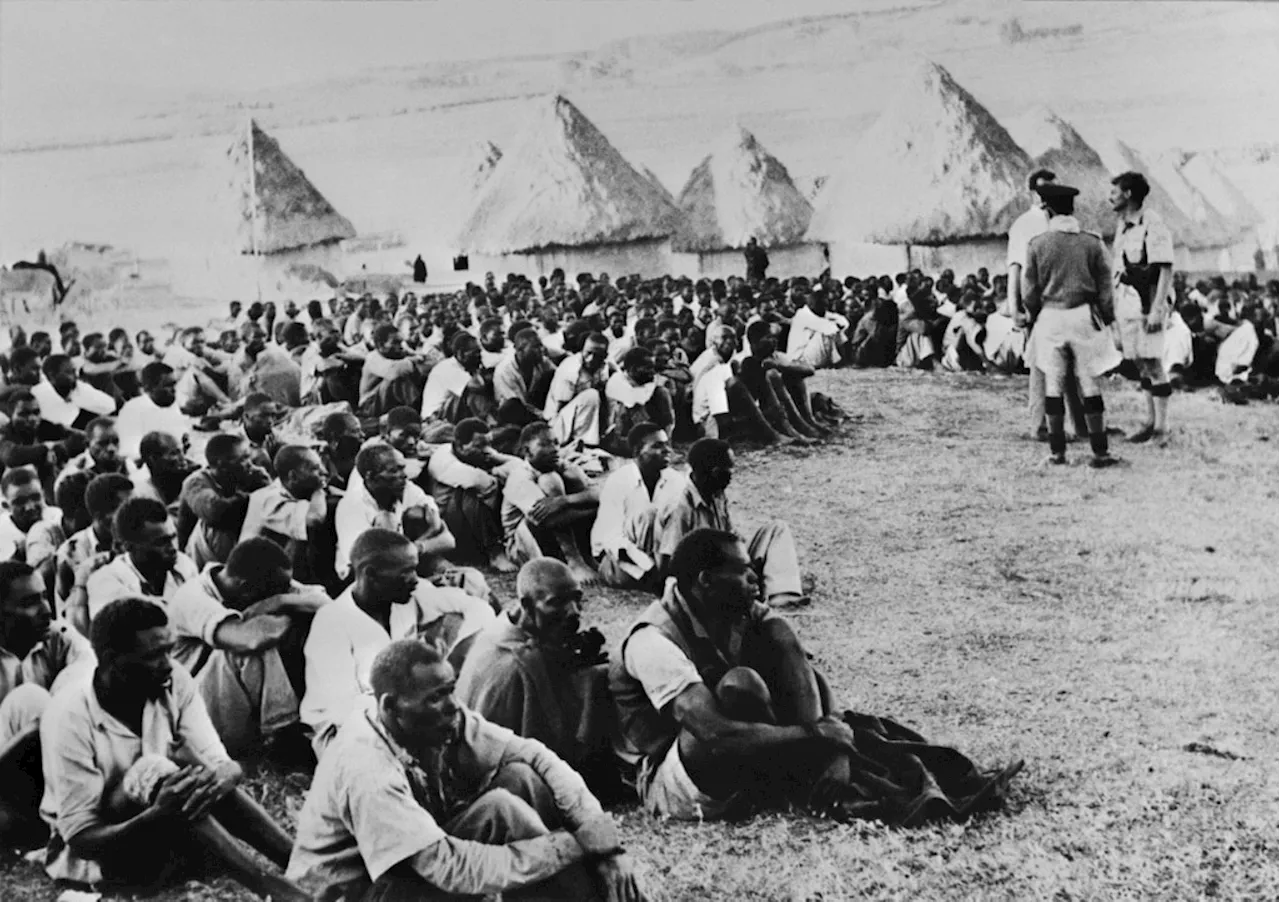

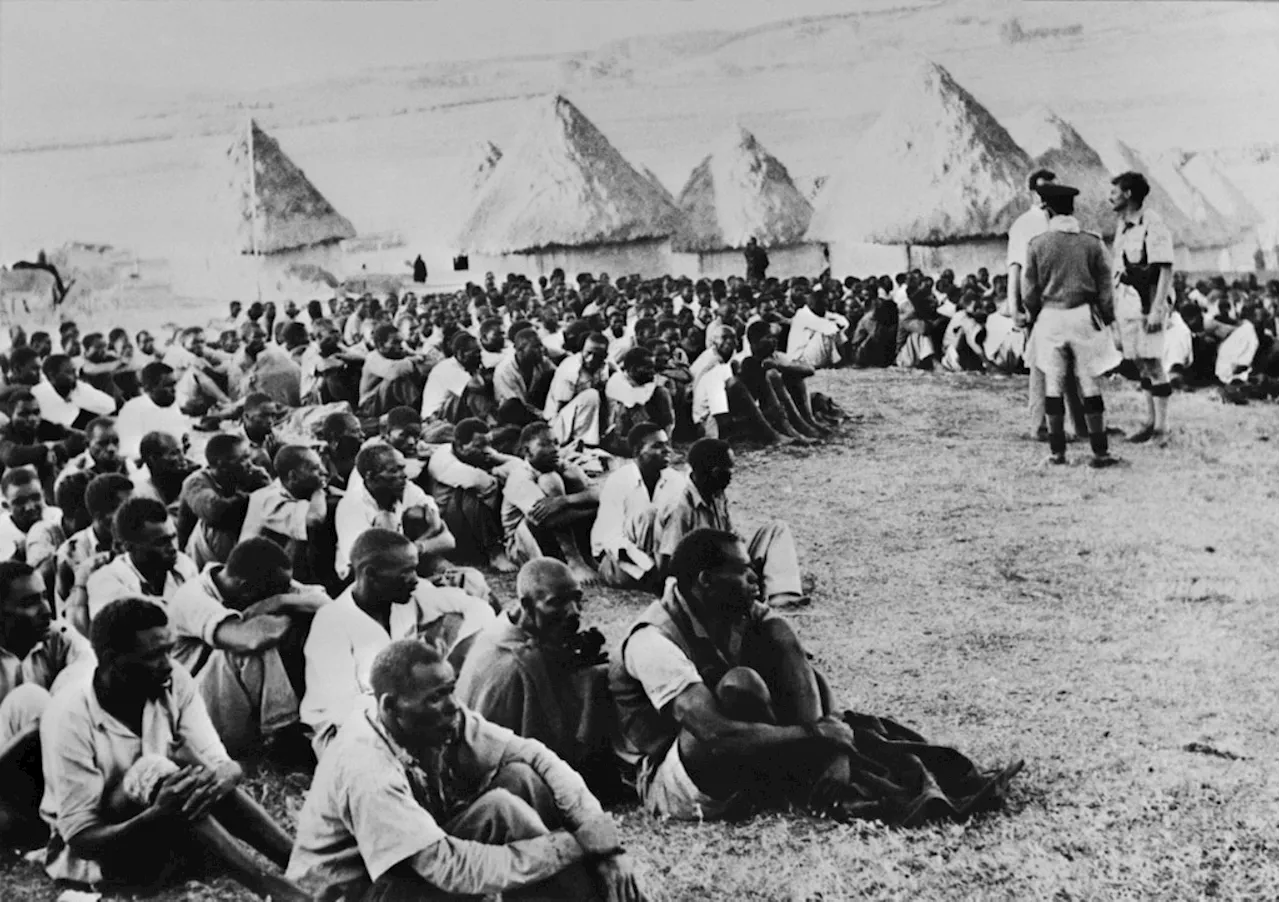

King Charles visits Kenya as colonial past looms largeKing Charles III begins a state visit to Kenya on Tuesday, where he will be confronted by widespread calls for an apology over Britain's bloody colonial past.

King Charles visits Kenya as colonial past looms largeKing Charles III begins a state visit to Kenya on Tuesday, where he will be confronted by widespread calls for an apology over Britain's bloody colonial past.

Read more »

King Charles visits Kenya as colonial abuses loom largeKing Charles III paid a solemn visit Tuesday to the birthplace of independent Kenya, at the start of a trip clouded by calls for an apology over Britain's bloody colonial past.

King Charles visits Kenya as colonial abuses loom largeKing Charles III paid a solemn visit Tuesday to the birthplace of independent Kenya, at the start of a trip clouded by calls for an apology over Britain's bloody colonial past.

Read more »

Israeli airstrikes level apartments in Gaza refugee camp, IDF troops battle HamasFootage of the scene from Al-Jazeera TV showed at least four large craters where buildings once stood, amid a large swath of rubble surrounded by partially collapsed structures.

Israeli airstrikes level apartments in Gaza refugee camp, IDF troops battle HamasFootage of the scene from Al-Jazeera TV showed at least four large craters where buildings once stood, amid a large swath of rubble surrounded by partially collapsed structures.

Read more »

Large scale search for missing fishermanA large scale search is underway for a man reported missing after two other fisherman were rescued when their boat was late returning to shore.

Large scale search for missing fishermanA large scale search is underway for a man reported missing after two other fisherman were rescued when their boat was late returning to shore.

Read more »

Victorian Government Releases 241 Annual Reports on 'Dump Day'The Victorian government releases a large number of annual reports on a single day, overwhelming journalists and hindering transparency and scrutiny.

Victorian Government Releases 241 Annual Reports on 'Dump Day'The Victorian government releases a large number of annual reports on a single day, overwhelming journalists and hindering transparency and scrutiny.

Read more »